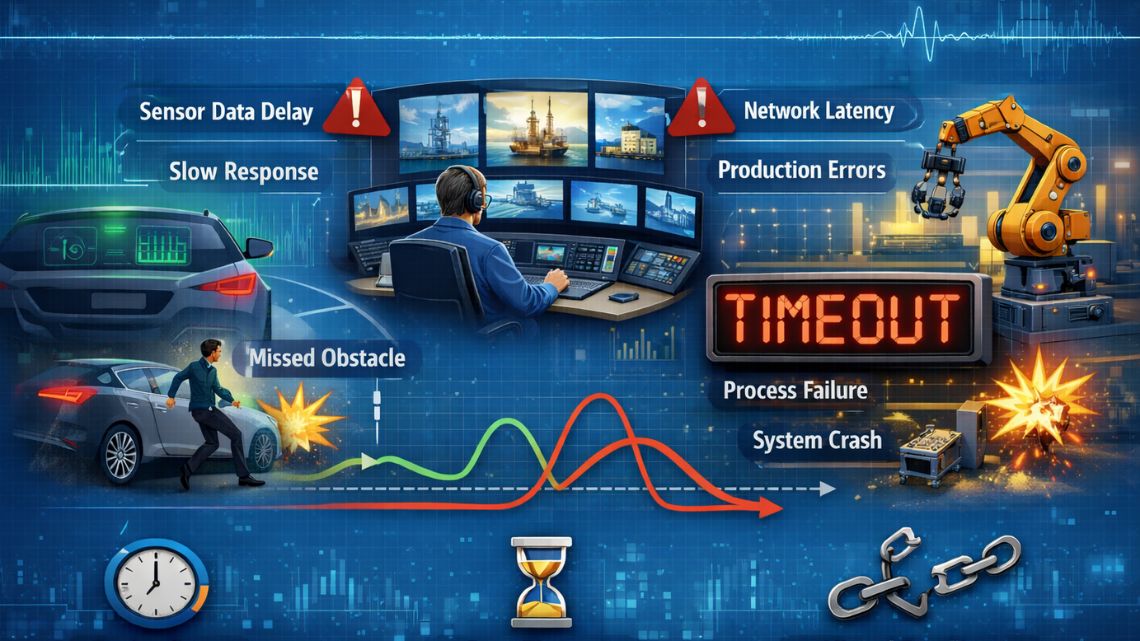

Latency is often treated as a performance metric, something to optimize for speed and efficiency. In modern engineering systems, it has taken on a more critical role. Latency is no longer just about responsiveness; it is increasingly a determinant of system stability and reliability. In environments where decisions are made in milliseconds, delays are not neutral: they alter system behavior.

Across industrial automation, power systems, robotics, and digital infrastructure, latency is emerging as a primary engineering constraint. Systems that appear functionally correct can fail operationally because they respond too late.

From Performance Metric to Failure Mechanism

Traditional engineering design focused on loads, stresses, and capacities. Time delays were often secondary considerations, particularly in systems where processes evolved slowly. Today’s systems operate at speeds where even small delays influence outcomes.

In control systems, latency introduces phase lag between measurement and response. When feedback arrives too late, corrective actions may be applied to conditions that no longer exist. This can produce oscillation, instability, or degraded performance. What appears as a minor delay at the component level can become a systemic issue when propagated across interconnected systems.
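The effect of late feedback can be illustrated with a minimal sketch. It assumes a deliberately simple model that is not taken from any particular system: a discrete-time integrator plant under proportional feedback, where the controller only sees the state as it was `delay_steps` samples ago. The function name `simulate` and the gain and delay values are illustrative choices.

```python
from collections import deque


def simulate(gain, delay_steps, steps=200, x0=1.0):
    """Integrator plant with proportional feedback on a delayed measurement.

    Update rule (an assumed toy model): x[k+1] = x[k] - gain * x[k - delay_steps]
    """
    x = x0
    # Measurements still "in flight"; index 0 is the oldest one, which is
    # what the controller actually sees at this step.
    in_flight = deque([x0] * (delay_steps + 1), maxlen=delay_steps + 1)
    history = [x]
    for _ in range(steps):
        measured = in_flight[0]      # state as it was delay_steps samples ago
        x = x - gain * measured      # correction based on stale information
        in_flight.append(x)          # newest measurement enters the pipeline
        history.append(x)
    return history


if __name__ == "__main__":
    for delay in (0, 1, 5):
        tail = simulate(gain=0.5, delay_steps=delay)[-20:]
        print(f"delay={delay} steps, peak |x| over last 20 samples: "
              f"{max(abs(v) for v in tail):.3g}")
```

With the same gain of 0.5, the loop settles toward zero when the delay is 0 or 1 sample, but oscillates with growing amplitude at a delay of 5 samples. Nothing about the controller changed; only the age of the information it acts on did.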

Latency in Control Systems and Automation

Modern control systems rely on continuous feedback loops. Sensors capture system state, controllers process this data, and actuators respond. The effectiveness of this loop depends on timing consistency.

When latency increases or becomes unpredictable, control accuracy declines. Systems may overcorrect or undercorrect, leading to inefficiencies or instability. In high-speed manufacturing, this can affect precision and quality. In robotics, it can impair motion control and coordination. As automation becomes more adaptive and data-driven, latency variability (jitter) becomes as important as latency magnitude. Systems must be designed to operate reliably not only under low-delay conditions, but also when timing fluctuates.
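To make the distinction between magnitude and variability concrete, the sketch below summarizes a set of latency samples with both kinds of measure. The function name `timing_summary`, the choice of population standard deviation as the jitter measure, and the sample values are all assumptions made for illustration.

```python
import statistics


def timing_summary(latencies_ms):
    """Summarize latency samples by magnitude (mean) and variability."""
    ordered = sorted(latencies_ms)
    # Simple nearest-rank style index for the 99th percentile.
    p99_index = min(len(ordered) - 1, int(0.99 * len(ordered)))
    return {
        "mean": statistics.fmean(ordered),
        "jitter": statistics.pstdev(ordered),  # spread around the mean
        "p99": ordered[p99_index],
        "worst": ordered[-1],
    }


if __name__ == "__main__":
    steady = [10.0] * 100                 # every sample takes 10 ms
    bursty = [5.0] * 90 + [55.0] * 10     # usually fast, occasionally very slow
    print("steady:", timing_summary(steady))
    print("bursty:", timing_summary(bursty))
```

Both hypothetical loops average 10 ms, so a mean-only report would treat them as equivalent. The jitter and tail figures separate them: the second loop occasionally delivers data 55 ms late, which is the case a controller has to survive.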

Distributed Systems and Network-Induced Delays

Engineering systems are increasingly distributed. Data is transmitted across networks, processed at different locations, and returned as control signals. This introduces communication latency that varies based on network conditions.

In cloud-connected systems, decisions may depend on data traveling between physical infrastructure and remote processing environments. While this enables advanced analytics and optimization, it also introduces delays that are difficult to predict and control.

To address this, many systems are shifting toward edge computing, where processing occurs closer to the source of data. This reduces latency but introduces additional complexity in system architecture and coordination. The trade-off between centralized intelligence and localized responsiveness is now a key engineering decision.
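One way to reason about this trade-off is a per-stage latency budget compared against the loop deadline. The sketch below is only an illustration: the stage names, the typical and worst-case figures, and the 30 ms deadline are invented, not measurements from any real deployment.

```python
def typical_ms(path):
    """Sum of the typical latency of every stage on a control path."""
    return sum(typical for typical, _worst in path.values())


def worst_case_ms(path):
    """Sum of the worst-case latency of every stage on a control path."""
    return sum(worst for _typical, worst in path.values())


def meets_deadline(path, deadline_ms):
    """A path qualifies only if its worst case fits inside the deadline."""
    return worst_case_ms(path) <= deadline_ms


# Hypothetical budgets: stage -> (typical ms, worst-case ms).
cloud_path = {
    "sensor_read": (1.0, 2.0),
    "uplink": (15.0, 60.0),
    "cloud_inference": (5.0, 10.0),
    "downlink": (15.0, 60.0),
    "actuate": (2.0, 3.0),
}
edge_path = {
    "sensor_read": (1.0, 2.0),
    "edge_inference": (12.0, 20.0),
    "actuate": (2.0, 3.0),
}

if __name__ == "__main__":
    for name, path in (("cloud", cloud_path), ("edge", edge_path)):
        print(name, typical_ms(path), worst_case_ms(path),
              meets_deadline(path, deadline_ms=30.0))
```

In this made-up budget the cloud path computes faster per inference but loses on the network legs, and its worst case is dominated by terms the designer does not control. The edge path is slower at computing yet bounded, which is the property a hard deadline cares about.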

Latency in Power and Energy Systems

Power systems are particularly sensitive to timing. Grid stability depends on maintaining balance between supply and demand in real time. As renewable energy sources increase, variability in generation adds complexity to system control.

Automated grid management systems must respond rapidly to fluctuations. Delays in sensing or control actions can lead to frequency deviations or instability. As systems become more interconnected, latency in communication between grid components becomes a critical factor. Engineers must design control strategies that remain stable even when communication delays are present.
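A standard way to quantify how much delay a feedback loop can tolerate, under the assumption of a linear loop model with a single gain crossover, is the delay margin. A pure delay of $\tau$ seconds leaves the loop gain unchanged and subtracts phase in proportion to frequency, so the loop reaches the stability boundary when the added lag consumes the phase margin:

$$
\angle e^{-j\omega\tau} = -\omega\tau,
\qquad
\tau_{\max} = \frac{\varphi_m}{\omega_{gc}}
$$

Here $\varphi_m$ is the phase margin in radians and $\omega_{gc}$ is the gain crossover frequency in rad/s. As a worked example with illustrative numbers that do not describe any specific grid controller, a loop with $\varphi_m = 45^\circ = \pi/4 \approx 0.785$ rad and $\omega_{gc} = 10$ rad/s tolerates roughly $\tau_{\max} \approx 0.785 / 10 \approx 0.079$ s, or about 79 ms, of additional delay before it becomes unstable.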

Data Processing and Decision Delays

In data-driven systems, latency is not limited to physical transmission. Processing time also contributes to delays. Advanced analytics, machine learning models, and optimization algorithms require computation time, which can affect responsiveness.

While these tools improve decision quality, they can introduce delays that impact system behavior. Engineers must balance decision accuracy with timeliness. A more accurate decision that arrives too late may be less effective than a faster, approximate response. This balance is increasingly relevant in systems that rely on predictive or adaptive control.
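One common pattern for this balance is an "anytime" computation that improves an estimate until a time budget expires and then returns the best answer available. The sketch below assumes a hypothetical helper `refine_until_deadline` and uses a Newton step for the square root of 2 as a stand-in for any iterative model or optimizer.

```python
import time


def refine_until_deadline(initial, refine, budget_s,
                          clock=time.monotonic, max_iters=1000):
    """Improve an estimate until the time budget runs out.

    Returns the best estimate so far and the number of refinement steps.
    The clock is injectable so the behavior can be tested deterministically.
    """
    deadline = clock() + budget_s
    estimate = initial
    iterations = 0
    while iterations < max_iters and clock() < deadline:
        estimate = refine(estimate)
        iterations += 1
    return estimate, iterations


def newton_sqrt2(x):
    """One Newton iteration toward sqrt(2); stands in for an expensive step."""
    return 0.5 * (x + 2.0 / x)


if __name__ == "__main__":
    for budget in (0.0, 1e-5, 1e-3):
        value, steps = refine_until_deadline(1.0, newton_sqrt2, budget)
        print(f"budget={budget}s -> estimate={value!r} after {steps} steps")
```

The design choice is that the deadline, not the convergence criterion, decides when to stop. A tighter budget yields a coarser answer, but the answer always arrives on time. Note that the loop only checks the clock between steps, so a single refinement step must itself be short relative to the budget.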

Interdependence and Cascading Effects

Latency rarely acts in isolation. In interconnected systems, delays in one subsystem can influence others. A delayed signal may trigger a response that affects downstream processes, creating a chain of reactions.

These cascading effects can amplify small delays into larger system disruptions. The challenge is not only to minimize latency, but to understand how delays propagate through the system. Engineering analysis must therefore consider timing as a system-wide property rather than a local parameter.
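A first step toward treating timing as a system-wide property is to model the stages as a dependency graph and compute when each output becomes available. The sketch below assumes a feed-forward pipeline in which a stage starts only after all of its inputs arrive. The stage names and delays are hypothetical, and the model covers propagation only, not amplification through feedback.

```python
from graphlib import TopologicalSorter  # standard library, Python 3.9+


def completion_times(delays_ms, depends_on):
    """Earliest time each stage's output is available.

    delays_ms:  stage -> its own processing delay
    depends_on: stage -> set of stages whose outputs it must wait for
    """
    done = {}
    for stage in TopologicalSorter(depends_on).static_order():
        upstream = [done[dep] for dep in depends_on.get(stage, ())]
        # A stage cannot start before its slowest input has arrived.
        done[stage] = max(upstream, default=0.0) + delays_ms[stage]
    return done


# Hypothetical pipeline: two sensing branches feed one controller.
depends_on = {
    "filter": {"sensor"},
    "controller": {"filter", "vision"},
    "actuator": {"controller"},
}
delays_ms = {"sensor": 2.0, "filter": 3.0, "vision": 8.0,
             "controller": 4.0, "actuator": 1.0}

if __name__ == "__main__":
    baseline = completion_times(delays_ms, depends_on)
    slowed = completion_times({**delays_ms, "sensor": 7.0}, depends_on)
    print("baseline actuator time:", baseline["actuator"])
    print("with slower sensor:    ", slowed["actuator"])
```

In this example the vision branch is initially the slowest path, so part of an added sensor delay is hidden by existing slack and only the remainder reaches the actuator. The same local delay can therefore be harmless or critical depending on where it sits in the graph, which is why a per-component view of latency is not enough.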

Designing for Latency Tolerance

Given that latency cannot be eliminated entirely, systems must be designed to tolerate it. This involves several engineering strategies.

Buffering and smoothing techniques can absorb short-term delays. Predictive control methods can estimate system behavior and compensate for expected latency. Redundancy and fallback mechanisms ensure that critical functions continue even when communication is disrupted. Importantly, systems must be tested under realistic conditions, including variable latency scenarios. Performance under ideal conditions does not guarantee stability in real operation.
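Two of these ideas, a fallback mechanism and testing under variable latency, can be sketched together. The example assumes a hypothetical `StalenessGuard` that substitutes a safe value whenever the newest received data is older than a configured limit, plus a small replay helper that measures how often the fallback triggers for a given latency trace. All names and numbers are illustrative.

```python
import random


class StalenessGuard:
    """Serve the latest value only while it is fresh; otherwise fall back."""

    def __init__(self, max_age_s, safe_value):
        self.max_age_s = max_age_s
        self.safe_value = safe_value
        self._value = safe_value
        self._stamp = None  # send time of the newest value held

    def update(self, value, sent_at_s):
        # Ignore out-of-order messages older than what we already hold.
        if self._stamp is None or sent_at_s > self._stamp:
            self._value, self._stamp = value, sent_at_s

    def read(self, now_s):
        if self._stamp is None or now_s - self._stamp > self.max_age_s:
            return self.safe_value
        return self._value


def fallback_fraction(latencies_s, period_s, max_age_s):
    """Replay a latency trace and report the share of ticks using the fallback."""
    guard = StalenessGuard(max_age_s, safe_value=0.0)
    fallbacks = 0
    for tick, latency in enumerate(latencies_s):
        now = tick * period_s
        # The message arriving at this tick was sent `latency` seconds ago.
        guard.update(1.0, sent_at_s=now - latency)
        if guard.read(now) == guard.safe_value:
            fallbacks += 1
    return fallbacks / len(latencies_s)


if __name__ == "__main__":
    rng = random.Random(0)  # seeded so the trace is repeatable
    trace = [rng.uniform(0.0, 0.08) for _ in range(1000)]
    print("fallback share:", fallback_fraction(trace, period_s=0.1, max_age_s=0.05))
```

Replaying recorded or synthetic latency traces through the same guard that runs in production turns "tested under realistic conditions" into a measurable quantity, such as the share of control ticks that ran on the safe value.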

Operational Relevance

The importance of latency is increasing as systems become faster, more connected, and more automated. Industries are pushing for real-time performance while relying on distributed architectures and data-driven control.

This combination makes latency a defining constraint. Systems that ignore timing effects risk instability, inefficiency, and failure, even when all components meet their specifications. Engineers must therefore treat latency as a fundamental design parameter, not an afterthought.

System-Level Perspective

Latency is not simply a technical detail. It is a structural characteristic of modern engineering systems. Its effects are shaped by system architecture, communication pathways, and control strategies.

As engineering systems continue to evolve, the ability to manage timing across components, networks, and processes will become increasingly important. Reliability will depend not only on what systems do, but on when they do it. Understanding latency as a failure mode shifts engineering focus from static performance to dynamic behavior. In doing so, it aligns design practice with the realities of modern, real-time systems.